Australia’s eSafety Commissioner, Julie Inman Grant, has found X (formerly Twitter) guilty of serious non-compliance with a transparency notice on child sex abuse material. The commissioner has issued X with an infringement notice for A$610,500.

The commissioner first issued transparency notices to Google, X (then Twitter), Twitch, TikTok and Discord in February under the Online Safety Act 2021. Under this legislation, the commissioner has powers to require online service providers to report on how they are mitigating unlawful or harmful content.

The commissioner determined Google and X did not sufficiently comply with the notices given to them. Google was warned for providing overly generic responses to specific questions, while X’s non-compliance was found to be more serious.

For several key questions, X’s response was blank, incomplete or inaccurate. For example, X did not adequately disclose:

- the time it takes to respond to reports of child sexual exploitation material

- the measures in place to detect child sexual exploitation material in live streams

- the tools and technologies used to detect this material

- the teams and resources used to ensure safety.

How severe is the issue?

In June, the Stanford Internet Observatory released a crucial report on child sex abuse material. It was the first quantitative analysis of child sex abuse material on the public sites of the most popular social media platforms.

The researchers’ findings highlighted Instagram and X (then Twitter) are particularly prolific platforms for advertising the sale of self-generated child sex abuse material.

These materials, and the accounts posting them, are often marked by specific recurring features. They may mention particular words or phrases paired with variations on the term “pedo”. Or they might have certain hashtags or emojis in their bios. Using these features, the researchers identified 405 accounts advertising the sale of self-generated child sex abuse material on Instagram, and 128 on Twitter.

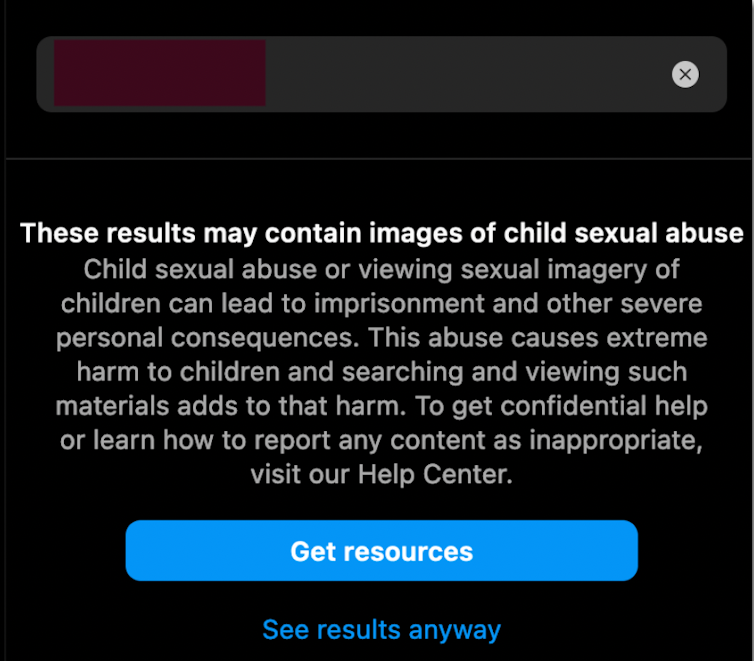

They found searching for such content on Instagram may result in an alert of potential child sex abuse material. However, the prompt still presents a clickthrough to “see results anyway”:

Stanford’s analysis found Instagram’s recommendation algorithms are particularly effective in promoting child sex abuse material once it has been accessed.

Although the researchers focused on publicly available networks and content, they also found some platforms implicitly allow the trading of child sex abuse material in private channels.

As for X, they found the platform even allowed the public posting of known, automatically identifiable child sex abuse material.

Why does X have this content?

The creation and trading of this content is commonly regarded as one of the most harmful abuses of online services.

All major platforms – including X – have policies that ban child sex abuse material from their public services. Most sites also explicitly prohibit related activities such as posting this content in private chats, and the sexualisation or grooming of children.

Even self-proclaimed free-speech advocate Elon Musk declared that removing child exploitation material was the top priority, after he took over the platform late last year.

Moderating child sex abuse material is challenging work, and can’t be done through user reporting alone. Platforms that allow nudity, such as X, have a responsibility to distinguish between minors and adults – both in terms of who is depicted in the content and who is sharing it.

They should scrutinise content shared voluntarily by minors, and ideally should also weed out any AI-generated child sex abuse material.

Musk fired hundreds of employees responsible for content moderation after taking over at X. It would seem likely the gutting of X’s trust and safety workforce would have reduced its ability to respond to both the harmful material and the eSafety notices.

Platforms could advance their moderation mechanisms by transparently sharing data with researchers. Instead, X has made this unaffordable.

Does the fine go far enough?

After years of leniency towards social media platforms, governments are now demanding greater accountability from them for their content, as well as on data privacy and child protection.

Non-compliance now attracts hefty fines in many jurisdictions. For instance, last year US federal regulators imposed a US$150 million (A$236.3 million) fine on X to settle claims it had misleadingly used email addresses and phone numbers for targeting advertising.

This year, Ireland’s privacy regulator slapped Meta, Facebook’s parent company, with a €1.2 billion (almost A$2 billion) fine for mishandling user information.

This year the Australian Federal Court also ordered two subsidiaries of Meta, Facebook Israel and Onavo Inc, to pay A$10 million each for engaging in conduct liable to mislead in breach of Australian consumer law.

The latest fine of A$610,500, though small in comparison, is a blow to X’s reputation given its declining revenue and dwindling advertiser trust due to poor content moderation and the reinstating of banned accounts.

What happens now?

X has 28 days to settle the fine. If it doesn’t, eSafety can initiate civil penalty proceedings and bring it to court.

Depending on the court’s decision, the cumulative fine could escalate to A$780,000 per day, retroactive to the initial non-compliance in March.

But the fine’s impact extends beyond just financial implications. By spotlighting the issue of child sex abuse material on X, it could increase pressure on advertisers to pull their ads, or empower other governments to follow suit.

Earlier this month, India’s Ministry of Electronics and IT sent notices to X, YouTube and Telegram, instructing them to remove child sex abuse material for users accessing the sites from India – while threatening heavy fines and penalties for non-compliance.

It seems X is in hot water. To get out, it’ll need to make a 180-degree turn on its approach to moderating content – especially that which harms and exploits minors.

- Marten Risius, Senior Lecturer in Business Information Systems, The University of Queensland and Stan Karanasios, Associate Professor, The University of Queensland

This article is republished from The Conversation under a Creative Commons license. Read the original article.
